MapStory

Prototyping Editable Map Animations with LLM Agents

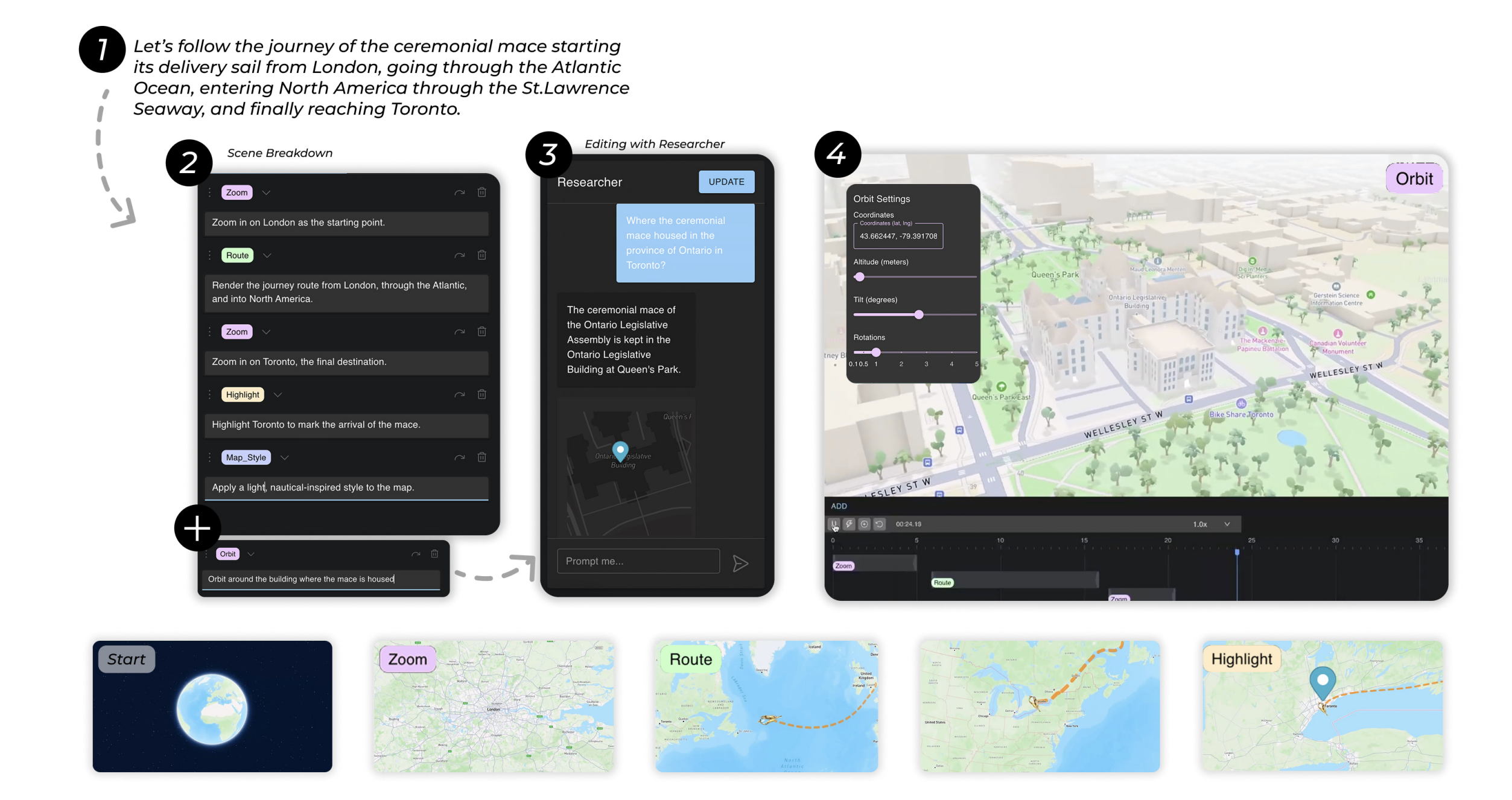

MapStory lets users create animated map narratives through conversation with LLM agents.

Overview

MapStory is a system for creating animated map-based narratives through natural language. Instead of manually scripting camera movements, timing animations, and coordinating visual elements, users describe what they want in plain English, and a multi-agent LLM pipeline translates that description into fully editable map animations. The system was built in collaboration with Adobe Research and published at UIST 2025 (the 38th Annual ACM Symposium on User Interface Software and Technology) in Busan, South Korea.

How It Works

Natural Language Input

Users describe the map animation they want conversationally. Something like "show a route from Tokyo to Osaka, then zoom into Kyoto" is enough to get started.

LLM Decomposition

The system uses function-calling LLM agents to decompose the user's intent into structured animation commands. Rather than generating free-form text, the LLM selects from a predefined set of animation functions, so every output is a valid command.
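To make the idea concrete, here is a minimal sketch of checking a model's function calls against a fixed registry. The function names (`draw_route`, `zoom_to`), their parameters, and the call format (a dict with `name` and already-parsed `arguments`) are assumptions for illustration, not MapStory's actual function set.

```python
from dataclasses import dataclass
from typing import Any

# Hypothetical registry: function name -> required parameters and their types.
ANIMATION_FUNCTIONS: dict[str, dict[str, type]] = {
    "draw_route": {"origin": str, "destination": str},
    "zoom_to": {"place": str, "zoom": float},
}


@dataclass
class AnimationCommand:
    name: str
    args: dict[str, Any]


def parse_tool_call(call: dict[str, Any]) -> AnimationCommand:
    """Validate one function call emitted by the model against the registry."""
    name = call.get("name")
    if name not in ANIMATION_FUNCTIONS:
        raise ValueError(f"unknown animation function: {name!r}")
    schema = ANIMATION_FUNCTIONS[name]
    raw_args = call.get("arguments", {})
    if set(raw_args) != set(schema):
        raise ValueError(
            f"{name}: expected parameters {sorted(schema)}, got {sorted(raw_args)}"
        )
    args: dict[str, Any] = {}
    for key, expected in schema.items():
        value = raw_args[key]
        # Accept integer literals (e.g. zoom=11) where a float is expected.
        if expected is float and isinstance(value, int) and not isinstance(value, bool):
            value = float(value)
        if not isinstance(value, expected):
            raise ValueError(f"{name}.{key}: expected {expected.__name__}")
        args[key] = value
    return AnimationCommand(name, args)


# Two calls a model might emit for the Tokyo/Osaka/Kyoto request.
calls = [
    {"name": "draw_route", "arguments": {"origin": "Tokyo", "destination": "Osaka"}},
    {"name": "zoom_to", "arguments": {"place": "Kyoto", "zoom": 11}},
]
commands = [parse_tool_call(c) for c in calls]
```

Anything outside the registry is rejected before it reaches the renderer, which is the property that makes function calling attractive here.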

Geospatial Reasoning

Specialized prompts guide the LLM to interpret geographic descriptions and convert them into map coordinates, camera positions, and element placements.
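The output of this step can be pictured as a camera definition derived from place names. The sketch below is a stand-in, not MapStory's prompt-based method: it replaces the LLM with a small hypothetical lookup table of approximate city coordinates and fits a camera using standard Web Mercator arithmetic. The viewport size, margin, and zoom cap are assumed defaults, and routes crossing the antimeridian are not handled.

```python
import math

# Hypothetical gazetteer with approximate (longitude, latitude) city centers.
GAZETTEER = {
    "tokyo": (139.65, 35.68),
    "osaka": (135.50, 34.69),
    "kyoto": (135.77, 35.01),
}

TILE_SIZE = 256  # pixel width of the whole world at zoom 0 in Web Mercator


def mercator_y(lat_deg: float) -> float:
    """Map a latitude to a 0..1 vertical fraction of the Web Mercator world."""
    lat = math.radians(lat_deg)
    return (1 - math.log(math.tan(lat) + 1 / math.cos(lat)) / math.pi) / 2


def fit_camera(places, width_px=800, height_px=600, margin=0.2, max_zoom=14.0):
    """Return a center and zoom that keep all named places in view."""
    if not places:
        raise ValueError("at least one place is required")
    points = [GAZETTEER[p.lower()] for p in places]  # KeyError if unknown
    lons = [p[0] for p in points]
    lats = [p[1] for p in points]
    # Midpoint of the bounding box (approximate: latitude is averaged in degrees).
    center = ((min(lons) + max(lons)) / 2, (min(lats) + max(lats)) / 2)
    # Fraction of the world the bounding box spans, padded by the margin.
    frac_x = (max(lons) - min(lons)) / 360 * (1 + margin)
    frac_y = abs(mercator_y(min(lats)) - mercator_y(max(lats))) * (1 + margin)
    zoom = max_zoom
    if frac_x > 0:
        zoom = min(zoom, math.log2(width_px / (TILE_SIZE * frac_x)))
    if frac_y > 0:
        zoom = min(zoom, math.log2(height_px / (TILE_SIZE * frac_y)))
    return {"center": center, "zoom": zoom}


route_camera = fit_camera(["Tokyo", "Osaka"])
city_camera = fit_camera(["Kyoto"])
```

A single place has no extent, so the sketch falls back to the zoom cap; a real system would more likely pick a zoom level suited to the kind of place being shown.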

Animation Generation

The structured commands are compiled into a full animation specification with keyframe timings, camera paths, and synchronized visual elements. Everything renders on a live map canvas.
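One simple way to picture the compilation step is sequential scheduling: each command gets a start and end time on a shared clock. The `Step` and `Keyframe` types, the field names, and the optional gap between steps are hypothetical, and the actual specification may well allow overlapping or parallel tracks.

```python
from dataclasses import dataclass


@dataclass
class Step:
    action: str        # e.g. "draw_route" or "zoom_to"
    duration_s: float  # how long the step should play


@dataclass
class Keyframe:
    action: str
    start_s: float
    end_s: float


def compile_timeline(steps: list[Step], gap_s: float = 0.0) -> list[Keyframe]:
    """Lay steps out back to back, separated by an optional pause."""
    timeline: list[Keyframe] = []
    clock = 0.0
    for step in steps:
        if step.duration_s <= 0:
            raise ValueError(f"{step.action}: duration must be positive")
        timeline.append(Keyframe(step.action, clock, clock + step.duration_s))
        clock += step.duration_s + gap_s
    return timeline


timeline = compile_timeline(
    [Step("draw_route", 3.0), Step("zoom_to", 2.0)],
    gap_s=0.5,
)
for frame in timeline:
    print(f"{frame.action}: {frame.start_s:.1f}s to {frame.end_s:.1f}s")
```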

Iterative Refinement

Users can modify any part of the animation through continued dialogue. The system maintains full state across turns, so each edit is applied incrementally instead of regenerating the animation from scratch.
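The difference between incremental editing and regeneration can be shown with a small state container. This is a sketch under assumptions: the `AnimationState` class, the element ids, and the snapshot-based `undo` are hypothetical and only illustrate how one turn can change a single property while everything else is left untouched.

```python
import copy
from typing import Any


class AnimationState:
    """Holds animation elements by id and a snapshot for every edit."""

    def __init__(self) -> None:
        self.elements: dict[str, dict[str, Any]] = {}
        self.history: list[dict[str, dict[str, Any]]] = []

    def apply_edit(self, element_id: str, changes: dict[str, Any]) -> None:
        """Merge changes into one element, leaving all others as they are."""
        self.history.append(copy.deepcopy(self.elements))
        self.elements.setdefault(element_id, {}).update(changes)

    def undo(self) -> None:
        """Restore the snapshot taken before the most recent edit, if any."""
        if self.history:
            self.elements = self.history.pop()


state = AnimationState()
# Turn 1: "show a route from Tokyo to Osaka"
state.apply_edit("route-1", {"from": "Tokyo", "to": "Osaka", "duration_s": 3.0})
# Turn 2: "then zoom into Kyoto"
state.apply_edit("camera-1", {"target": "Kyoto", "zoom": 11.0})
# Turn 3: "make the route slower" touches only one property of one element.
state.apply_edit("route-1", {"duration_s": 5.0})
```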

Architecture

MapStory operates through a multi-stage LLM-agent pipeline that converts natural language into structured animation specifications. The architecture separates concerns cleanly: language understanding, spatial reasoning, temporal coordination, and rendering each live in their own module.

LLM Agent Module

Processes user input via function calling. Maps natural language to structured animation commands.

Spatial Reasoner

Converts geographic descriptions to coordinates, camera positions, and element placements on the map.

Animation Controller

Manages playback, keyframe interpolation, and timing synchronization across all animation elements.
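Keyframe interpolation is the most mechanical of these responsibilities, so a short sketch may help. It assumes camera keyframes stored as `(time_s, (lon, lat, zoom))` tuples and uses plain linear interpolation with clamping at both ends; the real controller may use easing curves or a different camera model.

```python
from bisect import bisect_right

Camera = tuple[float, float, float]  # (longitude, latitude, zoom)


def camera_at(keyframes: list[tuple[float, Camera]], t: float) -> Camera:
    """Linearly interpolate the camera at time t; keyframes are sorted by time."""
    if not keyframes:
        raise ValueError("at least one keyframe is required")
    times = [time for time, _ in keyframes]
    # Clamp to the first or last keyframe outside the animated range.
    if t <= times[0]:
        return keyframes[0][1]
    if t >= times[-1]:
        return keyframes[-1][1]
    i = bisect_right(times, t)  # index of the first keyframe strictly after t
    (t0, start), (t1, end) = keyframes[i - 1], keyframes[i]
    u = (t - t0) / (t1 - t0)  # progress between the two keyframes, 0..1
    return tuple(a + (b - a) * u for a, b in zip(start, end))


camera_keyframes = [
    (0.0, (139.0, 35.0, 5.0)),
    (2.0, (135.0, 34.0, 9.0)),
]
midpoint = camera_at(camera_keyframes, 1.0)
```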

State Tracker

Maintains animation configuration and interaction history for coherent multi-turn editing.

Key Contributions

Function-Calling for Structured Animation

Uses LLM function calling to generate valid animation commands rather than free-form text. This guarantees executable output and makes the system composable.

Conversational Authoring Model

Introduces an iterative dialogue pattern where non-expert users can create complex animated map narratives through back-and-forth refinement.

Geospatial-Specific Prompt Design

Custom prompt engineering tailored for map-based storytelling. The prompts guide the LLM to reason about geography, routes, and spatial relationships directly.

Editable Output

Animations are not black-box renders. Every generated element is individually editable, letting users fine-tune camera paths, timing, and visual elements after generation.

Publication

Aditya Gunturu, Ben Pearman, Keiichi Ihara, Morteza Faraji, Bryan Wang, Rubaiat Habib Kazi, Ryo Suzuki. MapStory: Prototyping Editable Map Animations with LLM Agents. The 38th Annual ACM Symposium on User Interface Software and Technology (UIST '25), September 28 – October 1, 2025, Busan, Republic of Korea.

The full paper is available on arXiv.